AI rules that protect people and enable innovation

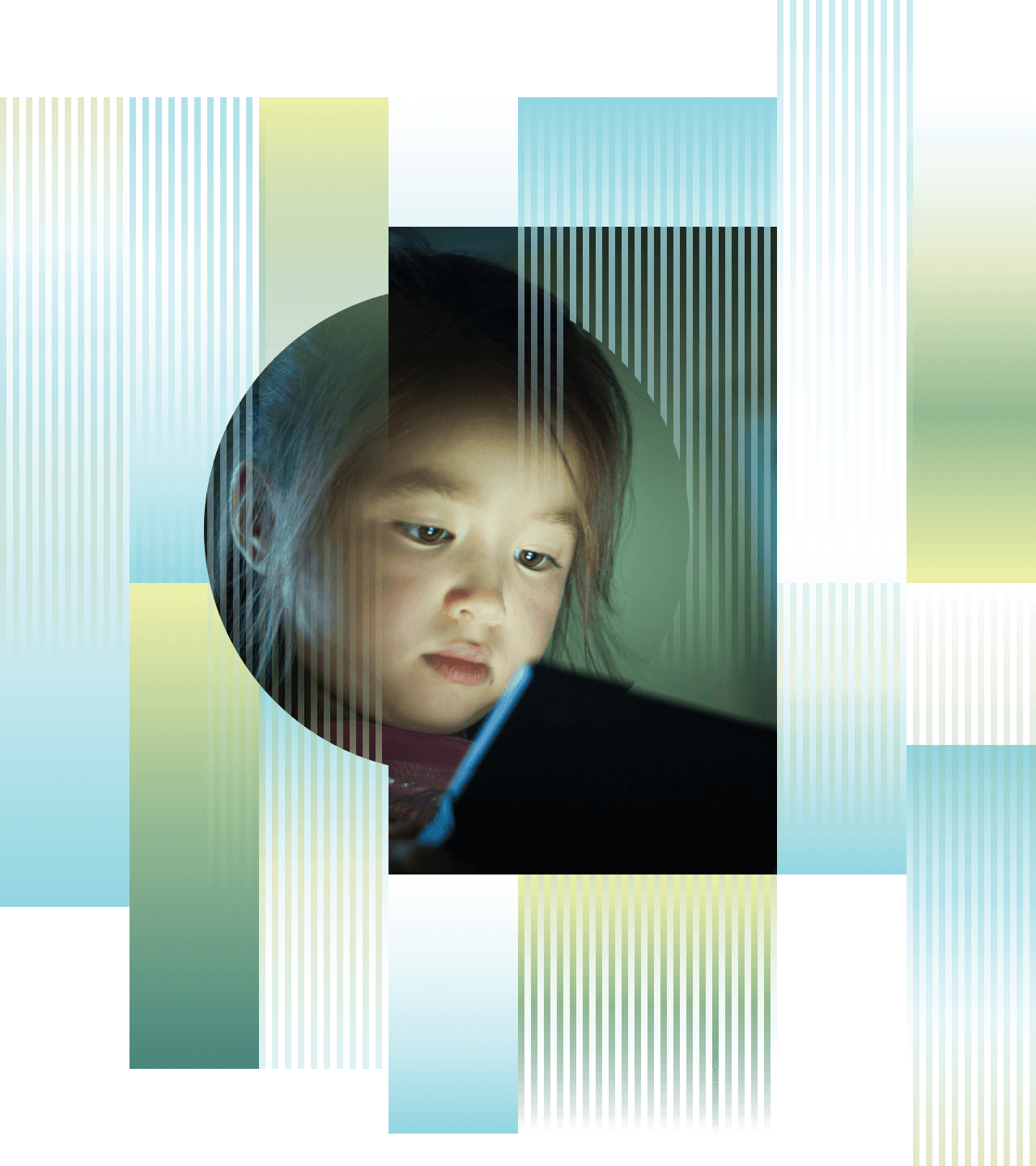

Artificial intelligence is already influencing how we work, learn and make decisions.

AI holds enormous potential to improve lives – from advancing healthcare to helping protect natural ecosystems – but only if it’s developed and used responsibly.

Right now, artificial intelligence is moving faster than the rules needed to protect people and our environment from harm.

Without appropriate guardrails, AI risks widening inequality, weakening privacy and damaging our environment.

Australia has an opportunity to set clear, balanced domestic rules that keep Australians safe from potential harms, enable innovation, and help shape international policy.

SafeAI brings together researchers, policymakers and civil society to help design practical, evidence-based policy that ensures AI benefits people.

Open Letter for Artificial Intelligence

AI has the potential to improve lives, from boosting productivity to transforming healthcare, education and environmental management around the world.

But this technology is advancing faster than our laws and institutions can keep up.

Without the right safeguards, AI could amplify inequality, deepen bias, erode trust and displace human accountability.

SafeAI

SafeAI is convened by Minderoo Foundation, bringing together leaders committed to clear rules and strong safeguards for artificial intelligence that protect people and our environment, while enabling innovation that benefits humanity.

We believe building a safe AI future requires collaboration across government, academia, industry and civil society.

From understanding risks to advancing practical policy solutions, we are committed to strengthening the evidence, policy and advocacy needed to ensure AI benefits people.

Research and insights

Minderoo Foundation commissioned research to understand Australians' sentiment towards artificial intelligence and regulation.

The research found that Australians see the potential benefits of AI but believe those benefits depend on clear, fair and transparent rules.

The biggest risk to progress isn’t the technology itself, but inaction. Without responsible regulation, public trust will erode and Australians will demand stricter controls, even if those controls limit innovation.

How do people feel about AI?

Joint research by the Alan Turing Institute and the Ada Lovelace Institute finds the UK public remains cautious about AI. People support beneficial uses but want stronger safeguards, clearer accountability and meaningful public involvement in decisions about how AI is developed and used.

How Californians feel about AI

Joint research by TechEquity and Diffusion.Au shows Californians are concerned about AI’s impacts on jobs, privacy and fairness. While recognising potential benefits, the public strongly supports clear rules, worker protections and accountability measures to ensure AI is developed and used in the public interest.

Great (public) expectations

Research by the Ada Lovelace Institute shows strong public support in the UK for fair, safe and accountable AI. People prioritise fairness and social impact over speed or profit, distrust self-regulation by tech companies and support independent regulators with real enforcement powers.
